Raytracing in Evergine

Introduction

Raytracing is a technology that improves the realism of our renders. The most widespread real-time rendering technique is rasterization, where we have worked for a long time to simulate real-world details such as different surfaces, light, shadows, and reflections. Raytracing, on the other hand, simulates all these details of the real world with the best approximation, but it has historically been too expensive to use in real-time rendering.

In 2018, Nvidia launched a new graphics card series with RTX technology, which uses dedicated hardware to reduce the computational cost of raytracing. That makes real-time raytracing possible and offers a good alternative to traditional rasterization.

Some visual effects, such as soft shadows, real-time reflections, or global illumination, are hard to implement with traditional rasterization, while raytracing makes them achievable with a relatively simpler implementation.

In the following years (2019, 2020, and 2021), raytracing spread to other graphics card vendors such as AMD and Intel, so the expectation is that raytracing will become the standard technology in the future.

Raytracing is supported in Evergine as part of its low-level API. The low-level API is a common interface over the most important graphics APIs, such as DX12, DX11, Metal, Vulkan, and OpenGL. For now, however, raytracing support is only available in the DirectX12 and Vulkan backends.

The Evergine team has unified the DirectX12 DXR and Vulkan Raytracing APIs into a single common API, so you can write a project using the Evergine low-level API and run it on either the DX12 or the Vulkan backend.

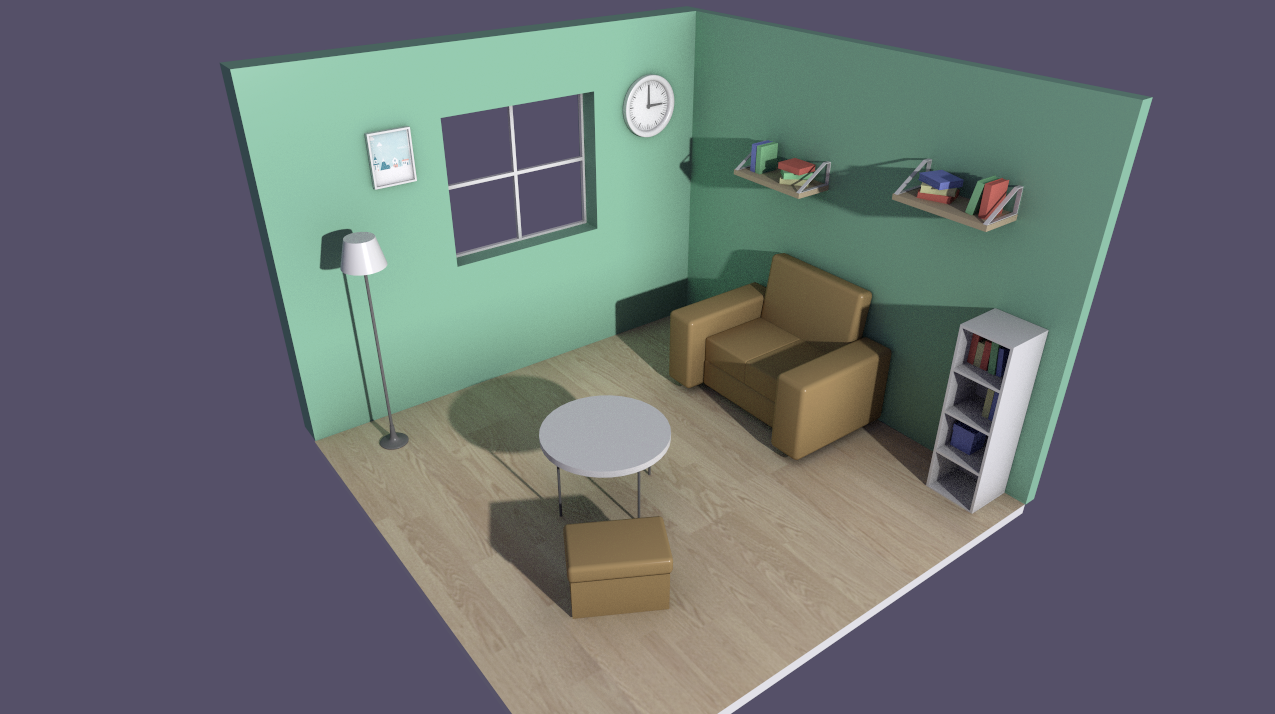

To show the possibilities of the Evergine raytracing low-level API, we have created a path tracer demo, released together with the Evergine launch, that you can download from GitHub. This demo includes some interesting effects, such as soft shadows, ambient occlusion, global illumination, and antialiasing, all implemented using raytracing.

The next sections explain how each of these effects was implemented.

First, the camera throws a single ray per pixel, and each ray either hits a surface or not. If the ray hits a surface, the resulting color is taken from the surface material; if the ray misses, the result is the background color.
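As a rough illustration of this hit-or-miss step, here is a minimal CPU-side sketch in Python. It is not the demo's code: the demo runs in shaders through the Evergine low-level API, while this sketch assumes a scene made of a single sphere, and the names `hit_sphere` and `trace_primary` are hypothetical.

```python
import math

def hit_sphere(origin, direction, center, radius):
    """Return the distance to the nearest hit in front of the ray, or None on a miss."""
    oc = [o - c for o, c in zip(origin, center)]
    a = sum(d * d for d in direction)
    b = 2.0 * sum(o * d for o, d in zip(oc, direction))
    c = sum(o * o for o in oc) - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return None  # the ray never touches the sphere
    t = (-b - math.sqrt(disc)) / (2.0 * a)  # nearer of the two roots
    return t if t > 1e-4 else None

def trace_primary(origin, direction, sphere, background=(0.2, 0.3, 0.5)):
    """Color of one camera ray: the material color on a hit, the background otherwise."""
    center, radius, material_color = sphere
    t = hit_sphere(origin, direction, center, radius)
    return material_color if t is not None else background
```

A ray pointing at the sphere returns the sphere's material color; any other ray returns the background color.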

Soft Shadows

To implement raytraced shadows, it is necessary to throw a new ray from every surface hit point toward the light. If this new ray collides with something before reaching the light, it returns the value “in the shadow”; otherwise it returns the value “in the light”.
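The shadow test can be sketched as follows. This is an illustrative Python sketch rather than the demo's shader code: `any_hit(origin, direction, max_distance)` is a hypothetical scene query that returns True when something lies along the ray within `max_distance`, and the small `bias` offset (also an assumption) keeps the ray from re-hitting the surface it starts on.

```python
import math

def in_shadow(hit_point, light_pos, any_hit, bias=1e-3):
    """Return True when something blocks the segment from hit_point to the light."""
    to_light = [l - p for l, p in zip(light_pos, hit_point)]
    distance = math.sqrt(sum(v * v for v in to_light))
    direction = [v / distance for v in to_light]
    # Start slightly off the surface to avoid self-intersection.
    origin = [p + bias * d for p, d in zip(hit_point, direction)]
    # Only hits closer than the light count as occluders.
    return any_hit(origin, direction, distance - bias)
```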

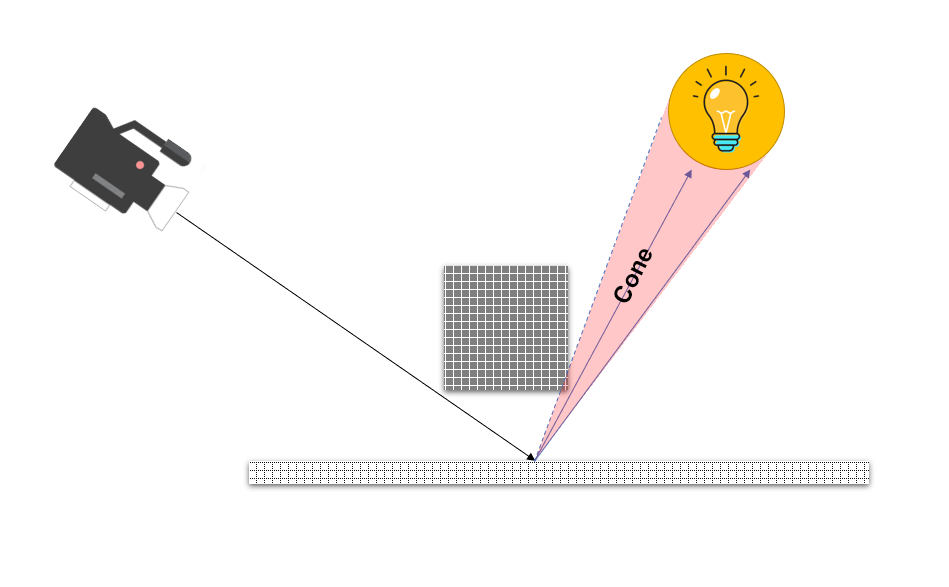

This is the simplest case and produces hard shadows; producing soft shadows takes a little more work. Our implementation throws several rays from every surface point toward the light (this demo uses only one light source). The light is spherical for simplicity, and the rays thrown from the surface hit point to the light form a cone.

The shadow color then depends on the combined result of all the rays. The penumbra areas appear where some rays return “in the shadow” while others return “in the light”.
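One way to combine the ray results is to average them into a light factor between 0 and 1. The Python sketch below assumes that approach; the demo's exact cone sampling is not described here, so this version simply aims rays at random points on the spherical light, which all fall inside the cone. `any_hit` is a hypothetical scene query, and `soft_shadow` and `random_point_on_sphere` are illustrative names.

```python
import math
import random

def random_point_on_sphere(center, radius, rng):
    """Pick a uniformly distributed point on the surface of a sphere."""
    while True:
        v = [rng.uniform(-1.0, 1.0) for _ in range(3)]
        n2 = sum(x * x for x in v)
        if 1e-6 < n2 <= 1.0:  # reject points outside the unit ball
            n = math.sqrt(n2)
            return [c + radius * x / n for c, x in zip(center, v)]

def soft_shadow(hit_point, light_center, light_radius, any_hit, samples=16, rng=None):
    """Fraction of shadow rays that reach the light: 0 = umbra, 1 = fully lit."""
    rng = rng or random.Random(0)
    lit = 0
    for _ in range(samples):
        target = random_point_on_sphere(light_center, light_radius, rng)
        to_light = [t - p for t, p in zip(target, hit_point)]
        distance = math.sqrt(sum(v * v for v in to_light))
        direction = [v / distance for v in to_light]
        if not any_hit(hit_point, direction, distance):
            lit += 1
    return lit / samples
```

Values strictly between 0 and 1 correspond to the penumbra. A larger `light_radius` widens the cone and therefore the penumbra.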

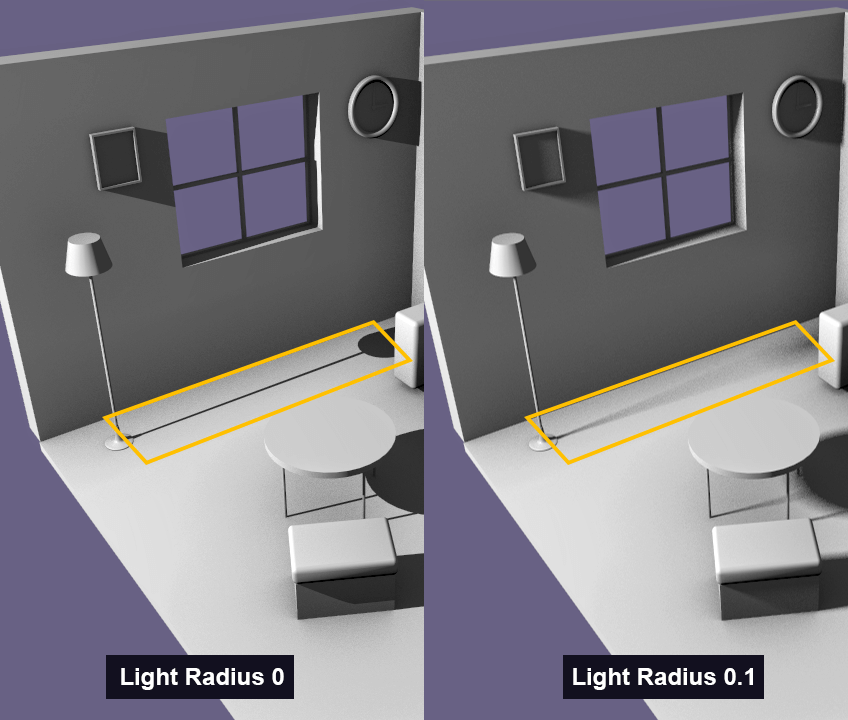

The following picture shows the penumbra areas by comparing the shadows produced using different light radii.

Ambient Occlusion

Ambient occlusion simulates areas that tend to block out or occlude the light because an object is close to them; hence they appear darker.

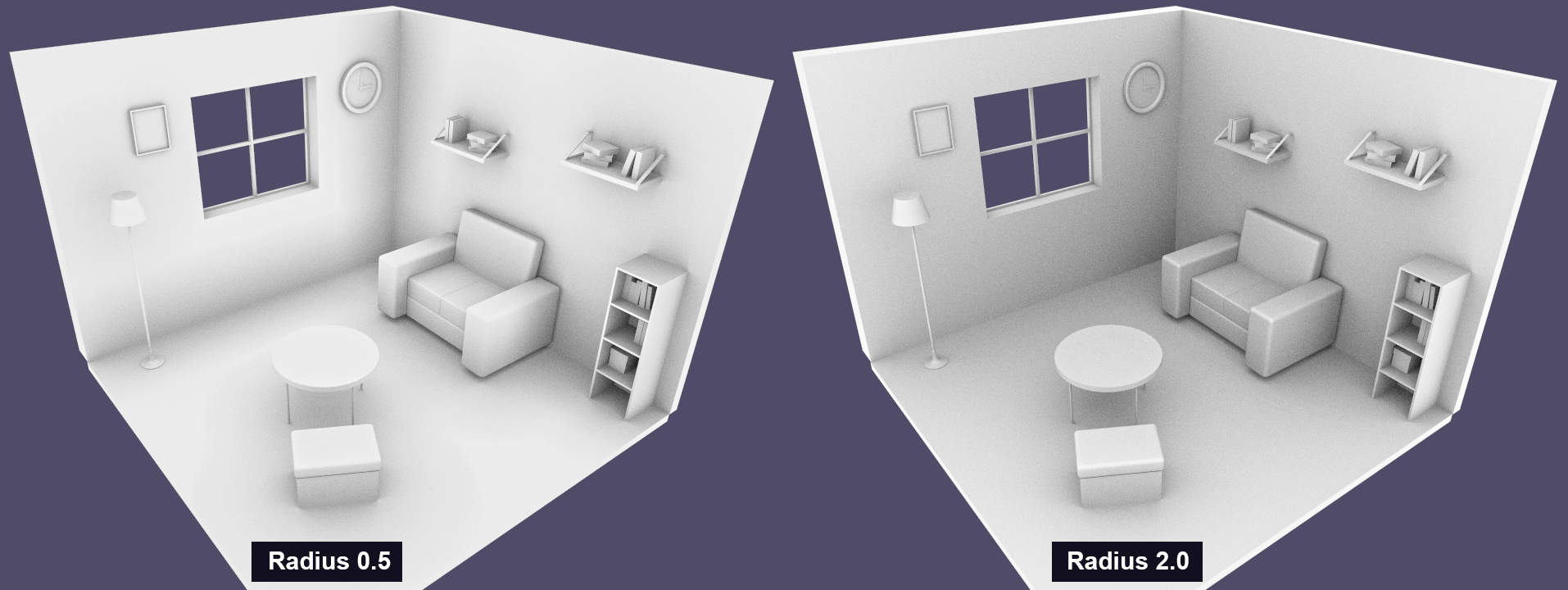

Our ambient occlusion implementation throws several short rays from the surface hit point within a hemisphere aligned with the surface normal. Like shadow rays, these rays detect whether something is close to the point and occludes the light bounces.
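A minimal sketch of this idea in Python is shown below. It is an illustration under stated assumptions, not the demo's shader: `any_hit(origin, direction, max_distance)` is a hypothetical scene query, directions are sampled uniformly over the hemisphere, and the names `sample_hemisphere` and `ambient_occlusion` are made up for this example.

```python
import math
import random

def sample_hemisphere(normal, rng):
    """Pick a random unit direction on the same side of the surface as the normal."""
    while True:
        v = [rng.uniform(-1.0, 1.0) for _ in range(3)]
        n2 = sum(x * x for x in v)
        if 1e-6 < n2 <= 1.0:
            n = math.sqrt(n2)
            d = [x / n for x in v]
            # Flip directions that point below the surface.
            if sum(a * b for a, b in zip(d, normal)) < 0.0:
                d = [-x for x in d]
            return d

def ambient_occlusion(hit_point, normal, any_hit, ray_length=0.5, samples=16, rng=None):
    """Fraction of short rays that escape: 1 = fully open, 0 = fully occluded."""
    rng = rng or random.Random(0)
    open_rays = 0
    for _ in range(samples):
        direction = sample_hemisphere(normal, rng)
        if not any_hit(hit_point, direction, ray_length):
            open_rays += 1
    return open_rays / samples
```

The returned factor can be multiplied into the surface color. Longer values of `ray_length` let more distant geometry darken the point.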

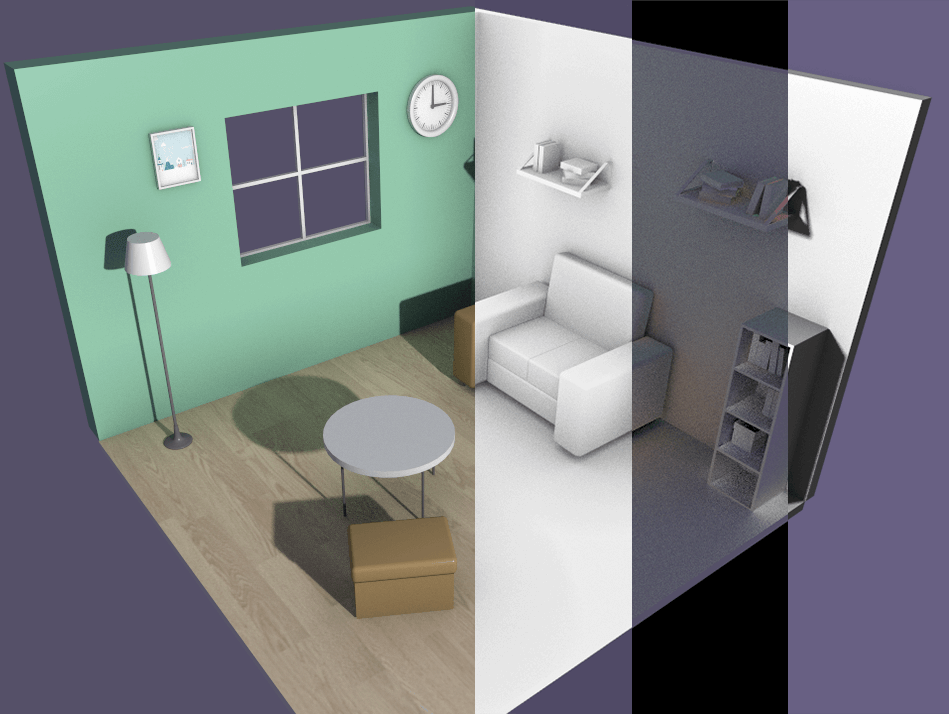

The following picture shows the result using different ambient occlusion ray lengths:

Global Illumination

Light behavior in the real world can be simulated by emitting rays from the light source that hit a surface. A portion of the ray's energy is absorbed by the surface and another portion is reflected, creating a new ray, and so on recursively until all the energy of the initial ray is absorbed.

With a bounces parameter, we can control how many times a light ray can be reflected by surfaces. The color resulting from a bounce is added to the surface color, so that, for example, a red object tints a nearby object red.

The following picture shows the global illumination output, where some areas are tinted by the colors of nearby objects.
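The recursion with a bounce limit can be sketched as below. This Python sketch is an assumption-laden illustration, not the demo's path tracer: `intersect(origin, direction)` is a hypothetical scene query returning `(hit_point, bounce_direction, surface_color)` or `None`, and `reflectance` is a made-up constant standing in for the fraction of energy that survives each bounce.

```python
def trace(origin, direction, intersect, bounces, reflectance=0.5,
          background=(0.0, 0.0, 0.0)):
    """Surface color plus the attenuated color gathered from further bounces."""
    hit = intersect(origin, direction)
    if hit is None:
        return background
    point, bounce_direction, surface_color = hit
    if bounces <= 0:
        return surface_color  # bounce budget exhausted: direct color only
    # Follow the reflected ray with one bounce fewer.
    bounced = trace(point, bounce_direction, intersect, bounces - 1,
                    reflectance, background)
    # Add the bounced color to the surface color, scaled by the reflected energy.
    return tuple(s + reflectance * b for s, b in zip(surface_color, bounced))
```

With `bounces=0` a gray floor stays gray; with `bounces=1` a red object hit by the reflected ray adds red to the floor's color.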

Antialiasing

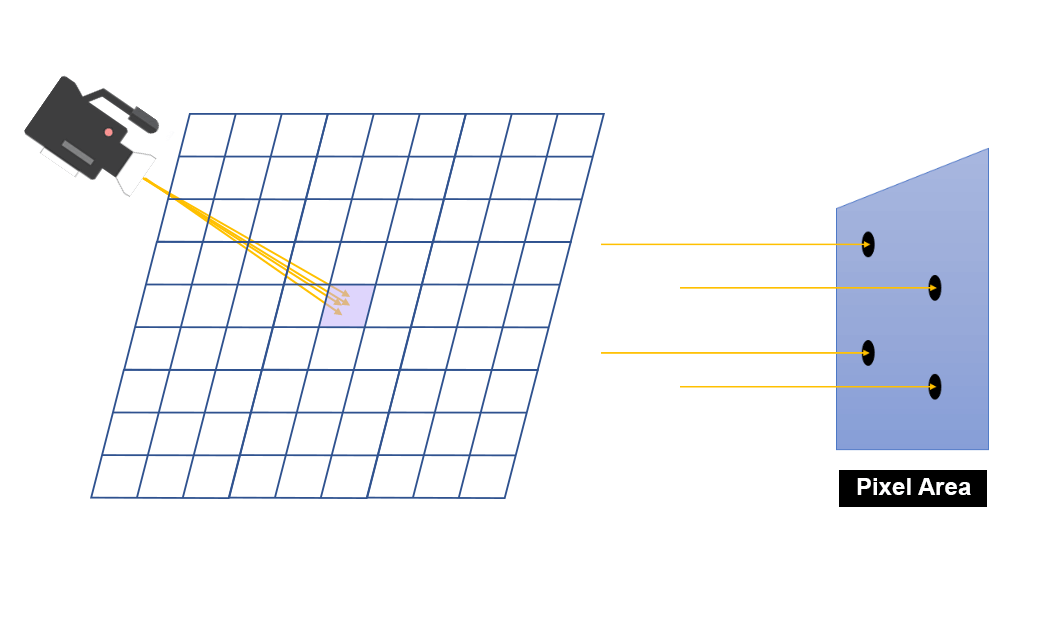

Antialiasing consists of removing jaggies from the image. These jaggies are produced by the limited pixel grid of the output image.

To achieve this, we need to throw several rays from the camera per pixel and average their colors. When only a single ray per pixel is thrown, it is aligned with the center of the pixel. To implement antialiasing, several rays are thrown per pixel through random positions inside the pixel. In our implementation, we use a Halton sequence, which is commonly used for this purpose.

The result of combining several rays per pixel is shown in the following picture:
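For reference, here is a small Python sketch of a Halton sequence used to place sample positions inside a pixel. The choice of bases 2 and 3 for the two axes is a common convention and an assumption here, as are the names `pixel_color` and `shade`; `shade(u, v)` stands for whatever traces a camera ray through image position `(u, v)`.

```python
def halton(index, base):
    """Return element number `index` (starting at 1) of the Halton sequence in `base`."""
    result, fraction = 0.0, 1.0
    while index > 0:
        fraction /= base
        result += fraction * (index % base)
        index //= base
    return result

def pixel_color(px, py, shade, samples=8):
    """Average the colors of several rays jittered inside pixel (px, py)."""
    total = [0.0, 0.0, 0.0]
    for i in range(1, samples + 1):
        u = px + halton(i, 2)  # horizontal offset in [0, 1)
        v = py + halton(i, 3)  # vertical offset in [0, 1)
        color = shade(u, v)
        for k in range(3):
            total[k] += color[k]
    return tuple(t / samples for t in total)
```

The base-2 sequence starts 0.5, 0.25, 0.75, 0.125, so successive samples keep filling the largest gaps in the pixel instead of clumping together as purely random offsets can.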

Future work

Raytracing today is a technology in continuous evolution, but the current results are already very impressive and superior to those of rasterization. All modern graphics engines are in the process of updating their renderers to use raytracing, but the integration cost is high and, since older graphics cards must still be supported, raytracing is offered only as an alternative. A good portion of modern engines are implementing hybrid renderers that combine rasterization and raytracing.

Evergine is the first C# engine with raytracing integration. Our objective is to offer our users the latest graphics technologies to use in their industrial projects or products.

According to our roadmap, we will continue working on raytracing to bring it to our high-level API and to offer raytracing integration in Evergine Studio too.